Written by James Day, RDCS

Artificial Intelligence (AI) is all the rage now. It’s where simulation was 20 years ago, and where point-of-care ultrasound (POCUS) was a decade earlier. What we are experiencing today is the application of AI to medical imaging and medical devices. So, let’s get into it.

First off, how does Webster’s Dictionary define AI?

Artificial Intelligence: 1) A branch of computer science dealing with the simulation of intelligent behavior in computers. 2) The capability of a machine to imitate intelligent behavior.

AI is a broad term that describes systems designed to simulate human intelligence or mimic human behavior. These systems can range from self-driving trucks to robotic arms on an assembly line.

Two terms come up most often when people talk about AI: machine learning and deep learning.

Machine learning is where a machine “self-learns” from observations or data to reach a decision. Typically, in medical imaging, machine learning algorithms learn in a supervised manner, where the model is taught with example images and corresponding expert annotations. There are other ways for the model to learn, such as unsupervised learning, semi-supervised learning, or reinforcement learning. Most traditional machine learning algorithms are considered “shallow algorithms” that are best suited to relatively simple tasks.
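To make the idea of supervised learning concrete, here is a minimal sketch in Python. It is a toy, not how any ultrasound system actually works: the two "image features" and the "good"/"poor" labels are made up for illustration, and the nearest-centroid method is just one of the simplest shallow algorithms. The point is only that the model is built from examples paired with an expert's annotation.

```python
# Toy supervised learning: a nearest-centroid classifier in plain Python.
# The feature values and labels below are made up for illustration only.

def train(examples):
    """Average the feature vectors for each label (the 'learning' step)."""
    sums, counts = {}, {}
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}

def predict(centroids, features):
    """Return the label whose average example is closest to the new input."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(centroids, key=lambda label: sq_dist(centroids[label], features))

# Each example pairs two hypothetical image features (say, brightness and
# edge sharpness, scaled 0 to 1) with an expert's annotation.
labeled_examples = [
    ([0.9, 0.8], "good"),
    ([0.8, 0.9], "good"),
    ([0.2, 0.3], "poor"),
    ([0.3, 0.1], "poor"),
]

model = train(labeled_examples)
print(predict(model, [0.85, 0.75]))  # closest to the "good" examples
print(predict(model, [0.25, 0.20]))  # closest to the "poor" examples
```

Nothing in the code spells out what makes an image "good." That comes entirely from the labeled examples, which is why the quality of the expert annotations matters so much.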

Deep learning is a specific type of machine learning. In 2012, a research group from the University of Toronto developed a deep learning algorithm that vastly outperformed earlier machine learning algorithms at recognizing objects in photographs. Deep learning can be thought of as stacking many shallow machine learning models together, allowing the overall “deeper” model to learn more complex tasks. This method was made possible by advances in how deep learning models are trained and by the availability of powerful computational resources such as the graphics processing unit (GPU).
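A small Python sketch can show why stacking helps. The weights here are picked by hand rather than learned, and the task (answer 1 when exactly one of two inputs is on) is a classic textbook toy, not an imaging problem. A single weighted sum cannot produce that pattern, but two simple layers stacked together can.

```python
# Hand-picked weights (not trained) showing what stacking layers adds.

def relu(x):
    """Pass positive values through and clip negatives to zero."""
    return max(0.0, x)

def shallow(x1, x2):
    """One linear unit: a single weighted sum of the inputs."""
    return 1.0 * x1 + 1.0 * x2

def deep(x1, x2):
    """Two stacked layers: two hidden units feeding one output unit."""
    h1 = relu(x1 + x2)        # fires when at least one input is on
    h2 = relu(x1 + x2 - 1.0)  # fires only when both inputs are on
    return h1 - 2.0 * h2      # the second layer combines the hidden units

for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x1, x2, "shallow:", shallow(x1, x2), "deep:", deep(x1, x2))
```

The shallow version keeps growing as more inputs switch on, while the stacked version returns 1 only when exactly one input is on. Real deep learning models follow the same principle with many more layers and with weights learned from data.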

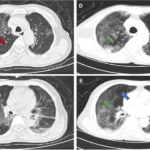

Now in 2020, you can find deep learning models automatically processing satellite imagery, generating artwork, understanding text, and, of course, interpreting ultrasound images.

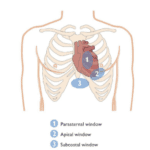

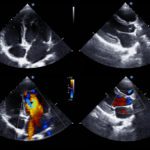

There are ultrasound devices available today that use AI to recognize certain aspects of an ultrasound image. Once an algorithm has been trained on thousands of acquired images and built into the device, the ultrasound system can use this on-board intelligence to judge the quality of the specific kinds of images it was trained to analyze.

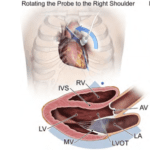

Additionally, the system performs calculations that, in the past, were performed by hand. Recall tracing the LV endocardial border in systole and diastole and then applying the calculation for an ejection fraction. Now, AI outlines the left ventricle’s contours in both diastole and systole and applies the calculation automatically to estimate the ejection fraction (as opposed to “eyeballing it”).
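For readers who want to see the arithmetic, here is a minimal Python sketch of the calculation once the contours are known. It assumes the single-plane method of disks, one widely used way of turning a traced ventricle into a volume, and it is not meant to represent how any particular device computes its result. The function names and the example volumes are hypothetical.

```python
import math

def disk_volume_ml(diameters_cm, length_cm):
    """Single-plane method of disks: treat the LV as a stack of thin disks.

    diameters_cm: LV width measured at evenly spaced levels along the long axis
    length_cm:    LV long-axis length
    Returns volume in mL (1 cubic centimeter equals 1 mL).
    """
    disk_height = length_cm / len(diameters_cm)
    return sum(math.pi / 4.0 * d ** 2 * disk_height for d in diameters_cm)

def ejection_fraction(edv_ml, esv_ml):
    """EF (%) = (EDV - ESV) / EDV * 100."""
    if edv_ml <= 0:
        raise ValueError("end-diastolic volume must be positive")
    return (edv_ml - esv_ml) / edv_ml * 100.0

# Hypothetical volumes: 120 mL at end-diastole, 50 mL at end-systole.
print(round(ejection_fraction(120.0, 50.0), 1))  # 58.3
```

Whether the contours come from a hand tracing or from an AI model, the final step is the same formula. What the AI replaces is the tracing, which is the slow and operator-dependent part.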

You save time because you don’t have to trace the left ventricle manually to obtain the information. AI tools are becoming especially helpful to those with limited experience who are learning certain applications in medicine.

I don’t see AI taking over medical imaging jobs, but rather empowering less experienced users so that many more can utilize ultrasound in their clinical practice. This should also increase patient safety and flatten the steep learning curve that sonographers have traditionally faced when training their eyes and hands to perform ultrasound exams.

Want more on AI and its impact on the future of POCUS? Listen to our podcast episode POCUS is a Pioneer in Ultrasound Artificial Intelligence.

